We live in times of impressive progress in AI (driven mainly by advances in Deep Learning). It is a broad and rich path with a large potential for applications. Having worked with neural networks for more than 15 years, I cannot yet see firm limits of where this path can take us. Neural networks (Deep Learning) are based on universal function approximation and being universal is…well, quite a powerful promise for a technology to make.

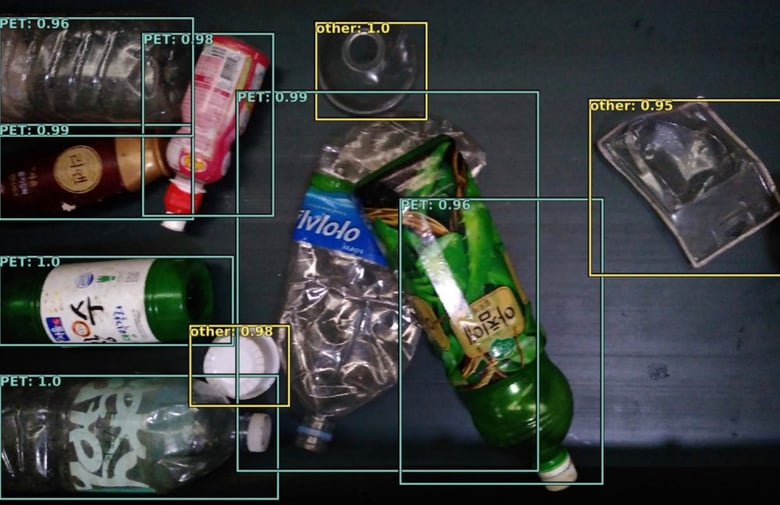

Thinking of applications, an interesting question that I came across, together with my friends at Greyparrot , is: Can Deep Learning be used for effectively recognizing recyclable objects and materials? If so, can it be done robustly across different environments and which methods can further improve recognition performance and scalability?

From a machine learning perspective, the visual recognition of waste can have some interesting properties and challenges:

- High variation — Waste is usually deformable (crushed bottles and cans) and can be modified in other ways

- Out-of-distribution — recyclable waste is a subset of all waste, which can be anything

- Long tail distributions — some waste is very frequent, sometimes most is rare

- Unlabelled data is plentiful — waste is everywhere

Importantly, Deep Learning as a research field, is still undergoing great expansion. New architectures and methods are significantly pushing the boundaries on visual tasks almost monthly (one example: EfficientDet: Towards Scalable and Efficient Object Detection). This is why I think we are unlikely to soon see a practical ‘Deep Learning SDK’, as it would become obsolete too quickly. This is why being aware of latest research and improving it, will be quite relevant for tackling these and related problems in the foreseeable future.

When confronted with a real-world challenging visual problem such as waste recognition, specific methods (some recent, some not) become relevant. We are investigating some of these, including:

- New DL architectures (one example in this post)

- OOD methods: Can the model know what it doesn’t know and avoid making a decision?

- Uncertainty modeling: both aleatoric/epistemic uncertainties seem relevant

- Active Learning: can a model influence new data, and therefore its own further learning, in some way? How to leverage uncertainty effectively?

- Domain adaptation and robustness: making the model robust to the changing physical environment (a very usual problem in real world applications). Performance may otherwise deteriorate.

- Few-shot and Zero-shot learning — will current approaches scale, do they require new algorithms, or will we see larger models produce that ability (as we’ve seen in the language domain: Language Models are Few-Shot Learners)

- Unsupervised and Self-supervised learning (are they currently more useful for OOD/DA and reducing labelling requirements, rather than improving recognition accuracy)

Crucially, building a large, curated dataset is a key aspect of what we do — it involves various challenges for capturing, storing and organizing the image data. It’s a whole universe of interesting engineering problems, but more on that in a separate post.

We are also looking for experienced DL Research Engineers, who are deeply interested in these problems. If you are one of them — contact us!